By Palme Research and Training Consultants | April 2026

Receiving a Major Revision decision from a peer-reviewed journal can feel like a rejection. In most cases it is not. It is an invitation to return, on condition that the manuscript is revised to satisfy expert readers who have identified weaknesses serious enough to delay acceptance, but not serious enough to end consideration altogether. Many researchers understand this in principle. What often proves far more difficult is the response itself. It is one thing to know that revisions are required. It is another to work through two dozen reviewer comments, judge which criticisms are valid, decide where to revise, where to clarify, and where to hold the line, and then present those decisions in a form that an editor can assess quickly and fairly.

The peer review response is among the most technically demanding forms of academic writing. It is also one of the least explicitly taught.

A good response document does more than answer comments. It demonstrates judgement. It shows that the authors can distinguish between criticisms that expose a genuine weakness, requests that deserve a measured adjustment, and suggestions that should not be adopted because they would distort the study’s design, exceed its stated scope, or misrepresent what the manuscript actually set out to do.

This article outlines a practical framework for responding to reviewer comments in a way that strengthens the paper where revision is needed, protects the argument where it remains defensible, and makes the revision process legible to editors.

A useful starting point is to recognise that most reviewer comments fall into one of three practical response paths. Some should be accepted outright. Others should be addressed in part, because the comment has merit but full adoption would create a different paper from the one submitted. A smaller number should be declined, politely but clearly, because they are based on a misreading, lie outside the scope of the study, or conflict with the manuscript’s declared methods.

To accept a comment is to recognise that the reviewer has identified a genuine weakness. The response should acknowledge the point directly, describe the revision that was made, and indicate where the change now appears in the manuscript. If new references were added, those should be named.

A partial acceptance requires more care. It signals that the reviewer has raised a legitimate issue, but that a full revision would compromise the study’s design, pre-specified methods, or substantive boundaries. In these cases, authors should revise as far as the paper can properly support, then explain why the revision stops there. Editors are rarely persuaded by vague statements that a concern has been “partially addressed.” They need to see both the substance of the revision and the reason its limits were necessary.

A respectful decline is also a legitimate response in some cases. What matters is not tone alone, but justification. If a comment is out of scope, rests on a misreading, or asks the manuscript to become something it was never intended to be, the authors should say so with precision. The strongest basis for doing this is evidence, whether from the manuscript itself, from methodological guidance, or from the journal’s own instructions.
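The three response paths can also be written down as a small checklist. The Python sketch below is illustrative only: the `Decision` enum and the `problems` function are hypothetical names, and the rules simply restate the guidance above, that an accepted or partly accepted comment needs a described change and that a partial acceptance or a decline needs a stated reason.

```python
from enum import Enum


class Decision(Enum):
    """The three response paths described in the text."""
    ACCEPT = "accept"
    PARTIAL = "partial"
    DECLINE = "decline"


def problems(decision: Decision, change_made: str, justification: str) -> list[str]:
    """Return a list of gaps in a drafted response (empty if none found)."""
    issues = []
    # Accepted and partly accepted comments must point to an actual revision.
    if decision in (Decision.ACCEPT, Decision.PARTIAL) and not change_made.strip():
        issues.append("describe the revision and where it appears")
    # Partial acceptances and declines must give the editor a reason.
    if decision in (Decision.PARTIAL, Decision.DECLINE) and not justification.strip():
        issues.append("explain why the revision stops here or was not made")
    return issues
```

A check like this cannot judge whether a justification is persuasive. It only flags responses where one is missing altogether.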

Before drafting responses, it helps to classify comments by type. In practice, the most useful categories are major concerns, minor concerns, technical corrections, clarification requests, and comments that fall outside the study’s scope.

Major concerns are the issues most likely to have shaped the editorial decision. These affect the methods, results, argument, or interpretation in a substantive way. If the editor highlights particular matters in the decision letter, those should usually be treated as major concerns regardless of how they were framed by the reviewers.

Minor concerns still require revision, but they do not threaten the paper’s central contribution. These often involve clarity, organisation, missing definitions, limited methodological detail, or inconsistencies across sections.

Technical corrections are narrower and should usually be handled directly. These include typographical errors, factual corrections, citation inconsistencies, statistical mistakes, or formatting issues.

Clarification requests often arise because the manuscript has not said enough, not because the underlying analysis is wrong. In many such cases, the solution is a clearer explanation in the response and a targeted revision in the manuscript.

Out-of-scope comments require a firmer form of reasoning. The authors should acknowledge what the reviewer appears to have expected, explain why that expectation does not match the study’s stated aims, and point to the section where the scope was defined.

Once comments have been classified, each should be assigned a simple reference code. A format such as R1C1 for Reviewer 1, Comment 1, followed by R1C2, R2C1, and so on, is usually sufficient. This makes it easier to track whether every comment has been addressed and allows editors to verify the relationship between the response table and the revised manuscript. The codes need not appear inside the manuscript itself unless that suits the author’s internal workflow or the journal’s submission process. What matters is traceability.
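Because the codes follow a fixed pattern, coverage can be checked mechanically. The following is a minimal Python sketch, assuming codes take the form R, reviewer number, C, comment number. The helper names and the input shape (a count of comments per reviewer) are assumptions made for illustration.

```python
import re

# Matches codes such as "R1C1" or "R2C13".
CODE_PATTERN = re.compile(r"^R(\d+)C(\d+)$")


def make_code(reviewer: int, comment: int) -> str:
    """Build a reference code for one reviewer comment."""
    return f"R{reviewer}C{comment}"


def parse_code(code: str) -> tuple[int, int]:
    """Split a reference code into (reviewer, comment) numbers."""
    match = CODE_PATTERN.match(code)
    if match is None:
        raise ValueError(f"Not a valid reference code: {code!r}")
    return int(match.group(1)), int(match.group(2))


def unanswered(comments_per_reviewer: dict[int, int], answered: set[str]) -> list[str]:
    """List every expected code that has no response yet, in reviewer order."""
    expected = [
        make_code(reviewer, comment)
        for reviewer, count in sorted(comments_per_reviewer.items())
        for comment in range(1, count + 1)
    ]
    return [code for code in expected if code not in answered]
```

Running `unanswered` before resubmission gives a quick list of comments that still lack an entry in the response table.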

The point-by-point response table remains the central accountability document in most revisions. It should identify the reviewer, the comment number, the relevant manuscript section, the type of comment, the reviewer’s point quoted or accurately summarised, the decision taken, and the change made. Where new sources were added to support a revision, those should also be noted.
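One way to keep these fields consistent is to treat each table row as a structured record. The sketch below is a hypothetical Python representation, not a format required by any journal. The field names mirror the list above, and `missing_fields` is an illustrative helper that reports which text fields have been left blank.

```python
from dataclasses import dataclass, field


@dataclass
class ResponseRow:
    """One row of a point-by-point response table (illustrative field names)."""
    reviewer: int
    comment_number: int
    section: str          # relevant manuscript section
    comment_type: str     # e.g. "major concern", "technical correction"
    reviewer_point: str   # quoted or accurately summarised
    decision: str         # e.g. "accept", "partial", "decline"
    change_made: str
    new_sources: list[str] = field(default_factory=list)

    @property
    def code(self) -> str:
        """Reference code in the R1C1 style."""
        return f"R{self.reviewer}C{self.comment_number}"

    def missing_fields(self) -> list[str]:
        """Names of text fields that are still empty."""
        text_fields = ["section", "comment_type", "reviewer_point", "decision", "change_made"]
        return [name for name in text_fields if not getattr(self, name).strip()]
```

For a declined comment, the `change_made` field would hold the justification rather than a revision, so that no row is left without an explanation.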

Order matters. Major concerns should appear first, because they are the comments most likely to influence the editor’s final judgement. Minor concerns can follow, then technical corrections, clarification requests, and any comments that were declined as out of scope. A well-structured table does not guarantee a positive editorial decision, but it does reduce friction. It helps the editor see that the revision has been approached systematically and in good faith.
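The ordering described here is easy to apply consistently once each row carries a category label. The Python sketch below assumes rows are plain dictionaries with hypothetical `category`, `reviewer`, and `comment` keys. The category labels are illustrative shorthand for the five types discussed earlier.

```python
# Display order for the response table, following the sequence in the text.
CATEGORY_ORDER = ["major", "minor", "technical", "clarification", "out_of_scope"]


def sort_rows(rows: list[dict]) -> list[dict]:
    """Order rows by category first, then by reviewer and comment number."""
    rank = {name: position for position, name in enumerate(CATEGORY_ORDER)}
    return sorted(
        rows,
        key=lambda row: (rank[row["category"]], row["reviewer"], row["comment"]),
    )
```

Sorting by reviewer and comment number within each category keeps the table predictable for an editor who is cross-checking it against the original reports.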

The formal response letter to the editor should remain brief and restrained. Its purpose is not to argue for acceptance. The revised manuscript must do that work. The letter should thank the editor and reviewers, state that the manuscript has been revised in light of the comments received, summarise the most important changes, and note transparently where some suggestions were not fully adopted. Where comments were declined, the letter can indicate that full justification appears in the response table.

One difficulty that deserves explicit mention is reviewer conflict. At times, two reviewers ask for incompatible things. One may call for substantial shortening of a section, while another asks for more detail in the same place. One may request an additional analysis, while another questions the value of the existing analysis. The worst response is to satisfy one reviewer silently and ignore the tension. A better approach is to identify the conflict openly, explain the decision that was taken, and state why that decision better serves the paper. Editors are more likely to trust authors who show that they recognised the tension and resolved it deliberately.

At this point, the process can still sound manageable. For a short paper with a modest number of comments, it often is. The difficulty becomes more obvious when the revision is large, when comments conflict, when new literature must be found quickly, or when manuscript changes need to be traced across multiple sections and several rounds of review. What begins as a straightforward exercise in academic judgement can become an administrative and analytical burden. Comments get missed. Revisions become hard to verify. New sources are added without a clear line of logic. The response table grows, but coherence weakens.

That is where the problem stops being merely conceptual and becomes operational.

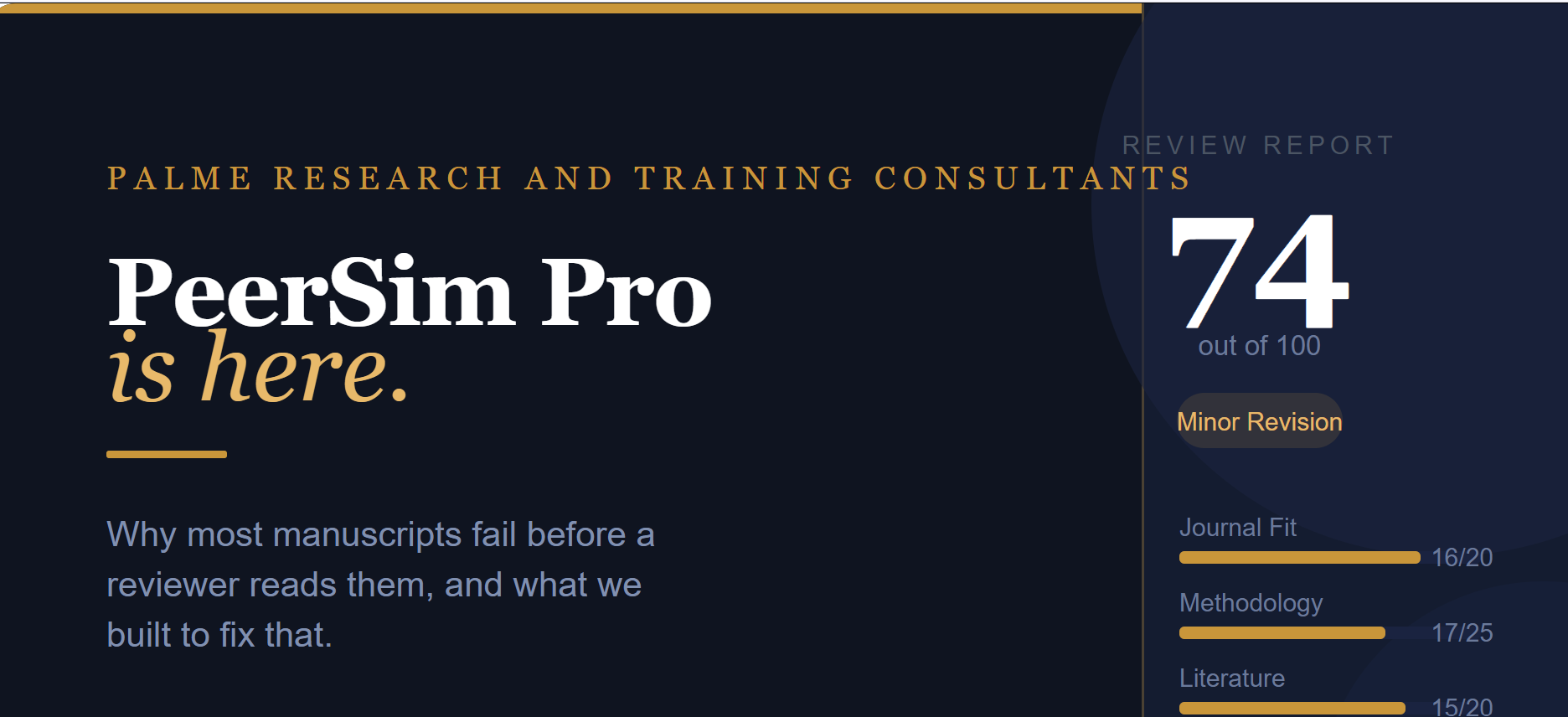

PeerSim Pro was built for that part of the workflow. It supports the full peer review response process by extracting and classifying reviewer comments, assisting with targeted literature searches for substantive concerns, helping restructure the manuscript around accepted revisions, and generating the core revision documents needed for resubmission. These include the point-by-point response table and the formal response letter. The aim is not to replace scholarly judgement. It is to make that judgement easier to organise, trace, and execute under real revision pressure.

Used well, PeerSim Pro helps authors move from reactive comment handling to a more disciplined revision process. Instead of responding piecemeal, they can work from a structured system that makes editorial expectations easier to interpret and easier to meet.

No framework will remove the need for intellectual judgement. Journal instructions still matter. Disciplinary norms still matter. Reviewer comments still need to be read carefully and weighed on their own terms. Even so, authors who approach revision with a clear decision logic, a traceable response structure, and an operational system for managing complexity are in a far stronger position than those who revise comment by comment without an overall method.

Major revision is not simply a test of whether a paper can be changed. It is a test of whether the authors can revise with precision, defend their choices with evidence, and present those choices in a way that an editor can trust. That is the standard PeerSim Pro was designed to support.